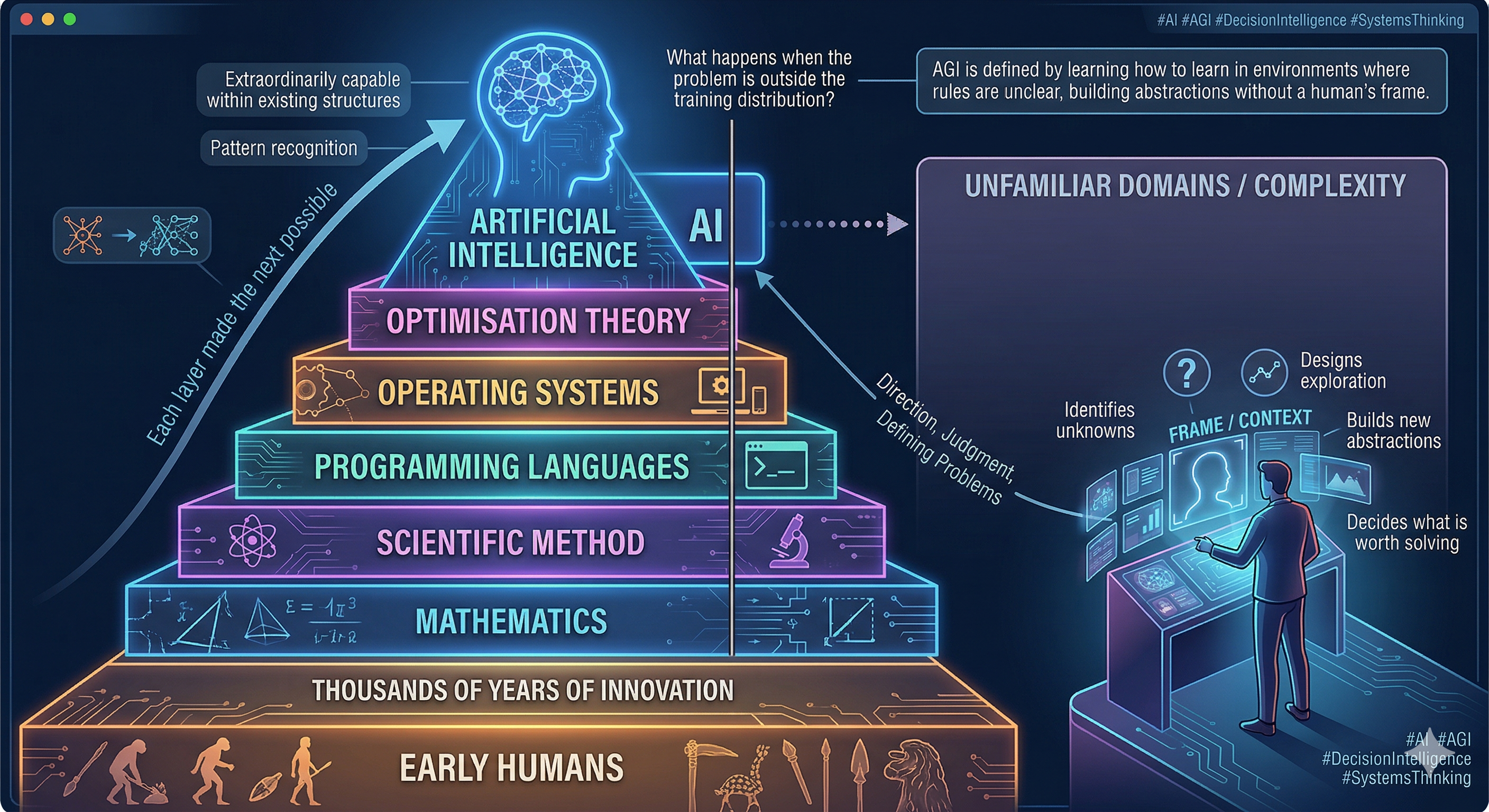

Humans Invented the Frame

Humans went from nothing to AI over thousands of years. Not by optimising existing ideas. By inventing entirely new ones.

Mathematics. Programming languages. Operating systems. Optimisation theory. The scientific method.

Each layer made the next layer possible. AI is a remarkable achievement built on top of all of it.

But here is the test that matters for any claim of AGI: what happens when the system meets something sufficiently unfamiliar?

In most real-world settings, today's AI struggles, degrades, or quietly depends on a human to redefine the problem.

Because humans don't just solve problems.

We decide what is worth solving. We frame the problem. We invent new abstractions when existing ones fail. We recognise when the objective itself is wrong.

We don't operate within a model. We create the model.

Current AI systems are extraordinarily capable within structures humans have already formalised — language, logic, code, documentation, structured decision problems.

But much of real-world complexity lives elsewhere. Physical constraints. Biological feedback. Long-horizon consequences. Evolving incentives. Objectives that nobody has yet defined clearly enough to optimise.

These domains don't just require better pattern recognition.

They require the ability to learn how to learn — in environments where the rules themselves are unclear.

AGI, if it emerges, won't be defined by benchmark performance.

It will be defined by whether it can identify what it does not understand, design ways to explore it, and build new abstractions from scratch — without a human handing it the frame.

Until then, AI remains powerful precisely because humans provide what it cannot: direction, judgment, and the willingness to operate in the unknown.

We provide the frame.

AI accelerates what fits inside it.